Background

First off, many kudos to fellow MVP Michael Van Horenbeeck, who published this article that got me started down the road to success. Then, I suggest reading the NextHop article on the very similar subject.

Then, just to make sure everything is understood, read the official Lync documentation on Reverse Proxy. Once you wade through that, you will also need to get a solid re-read of the Van Horenbeeck piece. Because we are going to be using Kemp LoadMaster for this exercise, I strongly suggest reading the following:

Further reading here may help you out also. However, with any luck this will not be an issue if you keep current on your Kemp firmware.

Scenario – what is the point?

As IPv4 address availability becomes tighter and tighter, more customers are starting to seriously push the question: “are all those public IP addresses REALLY needed?” Most, if not all, of my clients already have an external IP being used for Exchange services. The answer is no, not really, but (as always) it depends. For a smaller deployment, a single external IP can work. I would prefer to see two external IPs: one for Lync SIP, web conferencing (WC), and AV, and one for Reverse Proxy. While we could simply reuse the external IP already serving Exchange web services for the Lync web services, this article will demonstrate a single external IP for all Lync and Exchange.

One huge point: this is an exercise in proving something can be done. There are parts and pieces that I hope will be useful to someone else. However, this solution may not be well suited for an actual environment because the Lync Edge ports have been moved away from what a corporate firewall will typically allow. Specifically, using 444, 446, and 447 for Lync Edge services will usually cause federation with other entities to fail. Using this solution set for Lync Edge may also cause issues with audio/video media traffic. If you don’t need or want federation, then the entirety of this may be on target. That is not the norm for what *I* see, and I caution you to think this through before trying it in a production environment. Remember my comment above about how I would want two public IPs? This is the reason. YMMV.

As a final explanation, what I will demonstrate here is probably NOT an explicitly supported configuration. That said, a configuration is not unsupported just because the documentation does not call it out, and I see no reason to think that any individual piece here is unsupported.

If you read between the lines carefully, you will also see that the existing Exchange web services IP could easily double up for the Exchange and Lync web services. You may also note that this environment supports two complete Exchange organizations: one with Lync, and one with no Lync. I will not cover the setup of the routing for the core Lync Edge services; the following environment diagrams show what is needed there. This article will concentrate on the configuration of the Kemp (or any other HLB) to handle the web services.

As a side note, I use Kemp because of the ease of deployment. I find that the configuration and deployment tasks are very straightforward. This is not to say that an existing HLB can’t accomplish these tasks, but I don’t have specific knowledge of those other devices. The concepts will be the same, but the tasks to achieve the same outcomes on different hardware will be different.

If you have gotten this far, and you still want to move forward, let’s do it.

Environment

Here is our exercise environment to accomplish what we want. Note that I have ignored some of the traffic streams in this view; what is important is understanding the relationship between the domains, the load balancer/reverse proxy, and the subnets.

Once you have that digested, take a look at this one, and then go back and forth until you have a solid understanding. This has a good deal more detail; it shows all the ports and traffic types. As a logical diagram, the load balancer is sitting in the middle of all this, specifically dealing with all the web-based traffic and parceling out the traffic based on rules. The load balancer has one leg in each network; its default network is the 10.10.10.0 net and the default gateway is, as expected, on the default network.

Certificates

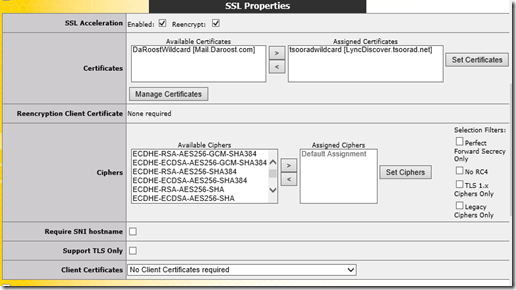

As you might think, the load balancer is going to want a certificate or two so that it can represent itself to clients as the service requested, decrypt the SSL stream as needed, and then re-encrypt before sending it down to the real servers that make up the virtual service. Because these certs were wildcards and my intent was only to use them on the load balancer, I used the DigiCert Cert utility (what a nice piece of gear) to create, import, and export the certificates. The Kemp seemingly does not care about trusted roots, so both of my internally generated certificates worked just fine. In my case, I generated two wildcard certificates, one from the CA in each domain. In a real-world situation, I think that a public wildcard would work better.

Here are the PKI certificates installed on the Kemp. Note that the domain1 certificate is only assigned to the one Virtual Service while the domain2 certificate is assigned to both. This is because the VS on the 10.10.10.0 network needs to handle URLs from both domain1 and domain2, while the VS on the 1.1.1.0 network only needs to handle domain2.
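If it helps to sanity-check the certificate assignments before touching the Kemp, here is a minimal Python sketch of the idea. Everything named in it is an assumption for illustration: the `*.domain1.example` and `*.domain2.example` wildcards, the host names, and the virtual service labels are placeholders, not the values from my environment. It only models the common rule that a wildcard covers exactly one left-most label.

```python
# Placeholder wildcard names and VS labels (assumptions, not real values).
VS_CERTS = {
    "vs-10.10.10.0": ["*.domain1.example", "*.domain2.example"],
    "vs-1.1.1.0": ["*.domain2.example"],
}


def wildcard_covers(cert_name: str, host: str) -> bool:
    """Return True if cert_name (exact or single-label wildcard) covers host."""
    cert_name = cert_name.lower()
    host = host.lower()
    if not cert_name.startswith("*."):
        return cert_name == host
    suffix = cert_name[1:]  # e.g. ".domain2.example"
    if not host.endswith(suffix):
        return False
    left = host[: -len(suffix)]  # the part the "*" has to stand in for
    return left != "" and "." not in left


def vs_can_serve(vs: str, host: str) -> bool:
    """True if any certificate bound to the virtual service covers host."""
    return any(wildcard_covers(cert, host) for cert in VS_CERTS[vs])
```

Running `vs_can_serve("vs-1.1.1.0", "mail.domain1.example")` comes back False under these assumptions, which mirrors why the domain1 certificate only needs to live on the one Virtual Service.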

Content Rules

At this point, the content rules are needed. You did read all the documentation mentioned above, yes? If not, I suggest you do so now. We’ll wait right here until you get that done.

Here are the content rules needed. Notice how some of them appear as both .net and .com. I have two separate Exchange instances running so I need to differentiate – hence some of the content rules get doubled with the not-so-subtle URL change between .net and .com. You can refer back to Mr. Van Horenbeeck’s article and get really fancy with these rules, but I went with the brute force simple method.
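For anyone who thinks better in code than in screenshots, the brute-force approach boils down to one host-header pattern per URL, doubled where both Exchange instances need it. The Python sketch below is only a model of that matching logic, not Kemp configuration. The rule names and host names (`mail.example.net`, `meet.example.com`, and so on) are hypothetical, and which domain carries Lync is an assumption made for the example.

```python
import re

# Hypothetical rules: (rule name, pattern applied to the Host header).
# Note the doubled "mail" rule, one per Exchange instance (.net and .com).
CONTENT_RULES = [
    ("exch_mail_net", re.compile(r"^mail\.example\.net$", re.IGNORECASE)),
    ("exch_mail_com", re.compile(r"^mail\.example\.com$", re.IGNORECASE)),
    ("lync_meet_com", re.compile(r"^meet\.example\.com$", re.IGNORECASE)),
    ("lync_dialin_com", re.compile(r"^dialin\.example\.com$", re.IGNORECASE)),
]


def select_rule(host_header: str):
    """Return the name of the first rule matching the Host header, or None."""
    host = host_header.split(":", 1)[0].strip()  # drop an optional ":port"
    for name, pattern in CONTENT_RULES:
        if pattern.search(host):
            return name
    return None
```

The anchored patterns are deliberate: an unanchored `mail.example` would happily match both the .net and the .com host, which is exactly the mix-up the doubled rules are there to prevent.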

Virtual Services

Now we get to make some Virtual Services. Did you really read all that documentation? Good. I am horrible on reading documentation, and it bites me almost every time. You would think that I would learn, eh wot? Apparently not. Adelante! Here is the VS construction.

Note that VS 3, 4, and 5 are blue. That is because they have subVS instances living under them. Here is the same stack, expanded.

Observe that while the far left column indicates the landing spot of the client on 443, the “Real Servers” column indicates the underlying server and the port changes, if any. I think the virtual services homed on the 1.1.1.0 net are self-explanatory – well, except for maybe #3. So let’s look at that one, and once you understand that, I think the 10.10.10.58:443 VS will make sense also. Remember that external URL requests landing on the external VS need to be translated from 443 to 4443 and from 80 to 8080.
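The port translation on the external VS is simple enough to write down as a lookup. This is a minimal Python sketch of that mapping only; the real server address `10.10.10.20` is a made-up placeholder, and passing unknown ports through unchanged is my assumption for the example rather than anything the Kemp does on its own.

```python
from typing import Tuple

# External client port -> port on the real server, per the text above.
EXTERNAL_TO_REAL_PORT = {443: 4443, 80: 8080}


def real_server_target(real_server_ip: str, client_port: int) -> Tuple[str, int]:
    """Return the (ip, port) a request landing on client_port is sent to.

    Ports without an entry pass through unchanged (assumption for this sketch).
    """
    return real_server_ip, EXTERNAL_TO_REAL_PORT.get(client_port, client_port)


# Placeholder real server address, not one from the environment diagrams.
print(real_server_target("10.10.10.20", 443))
print(real_server_target("10.10.10.20", 80))
```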

The interesting part is the “Advanced Properties” section, where doodly-squat is done until AFTER the subVS objects are created. At that point you need to cycle back to this level and enable content switching. You did read that stack of documents, yes? Oddly, when creating the subVS objects, I had some inconsistent results when assigning the content rules, which sometimes necessitated enabling the content rules at each level in reverse order, and sometimes as described in the documentation. Perhaps it was just me. But know that, in the end, the Kemp needs to look like this or traffic will go nowhere even if the VS does ping back at you.

Here is subVS “LSWebInt” in detail. And yes it looks very much like the top level. And is configured with the same items and settings. Except note that the certificate is now missing. Because the top layer is handling that slice of life.

Another important piece is for you to start comparing the “Selection Rules” as indicated in the pictures. Note that the numbers of rules match up. Now, I would have thought that assigning a rule at the bottom level would trickle up through the construction and be reflected at each layer above. But….NO! So, when putting all this together, make sure you know what goes where; the Kemp WUI is not going to keep track of it for you. If you add a rule at the subVS level on ONE of the real servers, do not expect the other server to know about it, don’t expect the Advanced Properties to magically catch on to things, and the high level VS won’t get it either. Conversely, if you assign rules at the top, do not expect those rules to flow down logically just because there is an underlying service.

Grrrr. Make yourself a table that lays out what you need, where it comes from and needs to go, and what content rule controls that. You do document things, right? If not, don’t come crying to me later not knowing what you did several months back and now don’t understand. Adelante.
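One way to keep that table honest is to write it down as data and let a few lines of Python point out where the levels disagree. This is a sketch under a stated assumption: that in the working state every rule shows up both at the top-level VS and on at least one subVS beneath it, which is how I read the matching rule counts in the screenshots. The class names, VS names, and rule names are all hypothetical, and nothing here talks to the Kemp.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class SubVS:
    name: str
    rules: Set[str] = field(default_factory=set)


@dataclass
class VirtualService:
    name: str
    rules: Set[str] = field(default_factory=set)
    sub_vs: List[SubVS] = field(default_factory=list)


def find_mismatches(vs: VirtualService) -> Dict[str, List[str]]:
    """Compare rules recorded at the top level with those on the subVSs."""
    child_rules = set().union(*(s.rules for s in vs.sub_vs))
    return {
        "missing_at_top": sorted(child_rules - vs.rules),
        "unused_at_top": sorted(vs.rules - child_rules),
    }


# Hypothetical documentation table for one VS.
vs = VirtualService(
    name="10.10.10.58:443",
    rules={"exch_mail_net", "lync_meet_com"},
    sub_vs=[
        SubVS("ExchNet", {"exch_mail_net"}),
        SubVS("LSWebInt", {"lync_meet_com", "lync_dialin_com"}),
    ],
)
print(find_mismatches(vs))
```

Here the check flags `lync_dialin_com` as assigned on a subVS but never recorded at the top, which is precisely the kind of thing the WUI will not tell you.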

The Big Picture

You might be interested to know how I am running both sets of SMTP traffic through only one port 25 connection. The answer is an External Relay domain from domain1 to domain2. A tad clunky, but hey, I only have one IP to work with (I’m cheap but not easy). And I want to reinforce the Lync Edge in all this. I am using single IP Edge, with ports 444, 446, and 447 for SIP, WC, and AV services. And again, this is NOT recommended if you are expecting ubiquitous federation to work. Most of the world will expect to be able to talk on 443 to your domain, and this construction is snaking all 443 to the load balancer for Exchange and Lync web services.
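Put as a lookup table, the single public IP ends up fanning out by port roughly like this. It is a Python sketch for illustration only: the destination labels are mine, the 444/446/447 order is assumed to follow the SIP, WC, AV order in the sentence above, and port 80 going to the load balancer is inferred from the 80 to 8080 translation described earlier.

```python
# Public port -> where the firewall hands the traffic (labels are illustrative).
PUBLIC_PORT_MAP = {
    25: "Exchange SMTP in domain1 (relays on to domain2)",
    80: "load balancer (web services)",
    443: "load balancer (Exchange and Lync web services)",
    444: "Lync Edge: SIP",
    446: "Lync Edge: web conferencing",
    447: "Lync Edge: A/V",
}


def destination_for(port: int) -> str:
    """Return the destination for a public port, or 'not published'."""
    return PUBLIC_PORT_MAP.get(port, "not published")


for port in sorted(PUBLIC_PORT_MAP):
    print(port, "->", destination_for(port))
```

Seeing 443 point at the load balancer instead of the Edge is the whole federation problem in one line.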

With all 443 traffic landing in one spot, we can use Content Rules on the Kemp (or on any other load balancer or appliance that supports this concept) to send each URL where it needs to go. Routing and network relationships need to be figured out in advance, as do the Content Rules and the subVSs that are actually handling the traffic.

While this exercise did a bit more than is normal for an organization, what with two Exchange organizations and only one Lync environment and using only one public IP, I think the point is made about bringing all 443 traffic to one spot and having something (Kemp in this case) decide where to send the traffic based on the requested URL. If I had more resources, and wanted to do this for a real organization, I would use one IP for Lync SIP, WC, and AV, and use another public IP for all 443 traffic. That would still save precious IP resources, which was the original goal.

YMMV.